
My source is just 20+ years of working on financial system administration and coding, and having seen innumerable cases where a confused junior SQL user or programmer referenced the id from the wrong foreign key field. If you have any sort of non-trivial database with a non-trivial number of programmers/data analysts, the payoffs from using UUIDs are HUGE. And UUIDs only look confusing for the first month or two. You soon start just calling out the last 3 or 4 digits to identify a row to a colleague or check a result, and it's no harder than disambiguating two 7-digit numbers that differ only by 1.
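To make the wrong-foreign-key mistake concrete, here is a minimal sketch in Python using the standard `sqlite3` and `uuid` modules. The `customers`, `products` and `orders` tables and the helper names are hypothetical, and ids are stored as text in both runs purely to keep the comparison simple. The join deliberately compares `product_id` against `customers.id`, which is the kind of slip described above.

```python
import itertools
import sqlite3
import uuid


def int_ids():
    """Per-table counter: every table starts again at 1, like a serial column."""
    counter = itertools.count(1)
    return lambda: str(next(counter))


def uuid_ids():
    """Random UUIDs: in practice a value from one table never appears in another."""
    return lambda: str(uuid.uuid4())


def mistaken_join_rows(new_id_source):
    """Join orders to customers through product_id (the wrong column) and count matches."""
    con = sqlite3.connect(":memory:")
    con.executescript(
        """
        CREATE TABLE customers (id TEXT PRIMARY KEY, name TEXT);
        CREATE TABLE products  (id TEXT PRIMARY KEY, title TEXT);
        CREATE TABLE orders    (id TEXT PRIMARY KEY, customer_id TEXT, product_id TEXT);
        """
    )
    # One independent id source per table (hypothetical schema).
    next_customer, next_product, next_order = (
        new_id_source(), new_id_source(), new_id_source()
    )
    customers = [next_customer() for _ in range(3)]
    products = [next_product() for _ in range(3)]
    con.executemany("INSERT INTO customers VALUES (?, ?)", [(c, "customer") for c in customers])
    con.executemany("INSERT INTO products VALUES (?, ?)", [(p, "product") for p in products])
    con.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [(next_order(), c, p) for c, p in zip(customers, products)],
    )
    # The bug: product_id is compared against customers.id.
    (count,) = con.execute(
        "SELECT COUNT(*) FROM orders o JOIN customers c ON o.product_id = c.id"
    ).fetchone()
    con.close()
    return count


int_matches = mistaken_join_rows(int_ids)
uuid_matches = mistaken_join_rows(uuid_ids)
print(int_matches, uuid_matches)
```

With per-table integer counters the mistaken join still returns plausible-looking rows, because id 1 exists in every table. With UUIDs the same query comes back empty, so the mistake shows up on the first run.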

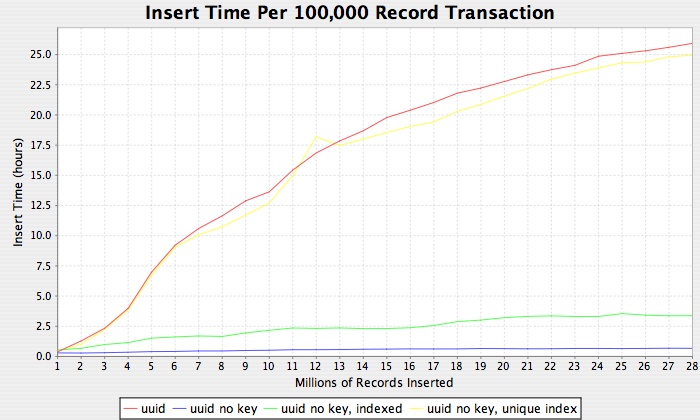

It becomes impossible for a user to access the wrong table by mistake. You don't realize how many potential errors you have with INTs until they go away and you start catching them all, thanks to the uniqueness of UUIDs. To paraphrase Heroku's lead Postgres guy once upon a time (sorry mate, I forgot your name): "If you aren't using UUIDs, you're just wrong." Knowing how much he knew, once I heard that from him I made the decision to base the design of a medium-sized ERP system around UUIDs instead of INT ids, and with more than 5 years of production experience, it was TOTALLY the right decision.

It seems like 80% of the possible numbers get wasted. I feel that a maximum limit of 250 million entries (worst case scenario) isn't enough for me, considering that this internal app (CMS) I'm making can generate a lot of entries automatically via scripts, and with versioning. (This isn't users creating accounts or anything, but users creating data entries, and lots of them.) If I have a limit of 250 million entries and, in the worst case, each of my data entries has 5 revisions in my version control system, I'd only have up to 50 million entries as the maximum limit, which doesn't feel like enough to me. So here I am, thinking of using a uuid instead. Should I just use that, so I don't have to worry about running out of rows?
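The worst-case arithmetic in the question can be checked with a few lines of Python. This is only a back-of-the-envelope sketch: the 250 million budget and the 5 revisions per entry come from the question, while the comparison against 32-bit ints, 64-bit ints and the 122 random bits of a version 4 UUID is added here for scale. The function and variable names are made up for illustration.

```python
INT4_MAX = 2**31 - 1      # largest signed 32-bit id: 2,147,483,647
INT8_MAX = 2**63 - 1      # largest signed 64-bit id
UUID4_RANDOM_BITS = 122   # a version 4 UUID carries 122 random bits


def distinct_entries(id_budget: int, revisions_per_entry: int) -> int:
    """How many entries fit if every revision of an entry consumes one id."""
    return id_budget // revisions_per_entry


# Numbers taken from the question: 250 million ids, 5 revisions per entry.
worst_case = distinct_entries(250_000_000, 5)

capacities = {
    "question's budget": 250_000_000,
    "32-bit int": INT4_MAX,
    "64-bit int": INT8_MAX,
    "uuid v4 (random bits)": 2**UUID4_RANDOM_BITS,
}
for label, ids in capacities.items():
    print(f"{label:>22}: {distinct_entries(ids, 5):,} entries at 5 revisions each")
```

The first row reproduces the 50 million figure. The other rows suggest that a 64-bit integer key would also remove the row-count worry, so running out of ids is not by itself a reason to pick UUIDs over wider ints.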

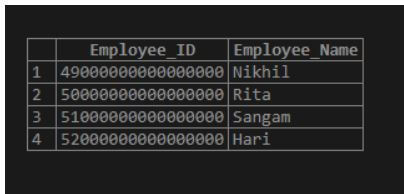

I know that ints are "good enough" for most purposes as they're fast and simple. But when I checked how Hasura handles auto-incrementing ints, it doesn't insert new rows with consecutive ids. I'd expect each new id to increment by one (n + 1). Instead, a series of ids being inserted may look like this:

1, 2, 5, 6, 10, 15, 16, 20, 30, 50, 55, 100

In some table, the highest id I had was about 100, and I only had 23 rows.

Update all columns to new value on conflict except primary keys and those columns having default values from sql func:

MySQL: INSERT INTO `users` *** ON DUPLICATE KEY UPDATE `name`=VALUES(name),`age`=VALUES(age)

PostgreSQL: INSERT INTO "users" *** ON CONFLICT ("id") DO UPDATE SET "name"="excluded"."name", "age"="excluded"."age"

SQL Server: MERGE INTO "users" USING *** WHEN NOT MATCHED THEN INSERT *** WHEN MATCHED THEN UPDATE SET "name"="excluded"."name"
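The update-on-conflict statements above can be tried locally without a database server. The sketch below uses Python's built-in `sqlite3` module, on the assumption that the bundled SQLite is version 3.24 or newer, which accepts the same `ON CONFLICT ... DO UPDATE` form as the PostgreSQL statement. The `users` table mirrors the one in the statements; the sample rows are made up.

```python
import sqlite3

con = sqlite3.connect(":memory:")
con.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, age INTEGER)")

# Insert a row, or overwrite name and age when the id already exists.
# "excluded" refers to the row that the INSERT tried to write.
UPSERT = """
INSERT INTO users (id, name, age) VALUES (?, ?, ?)
ON CONFLICT (id) DO UPDATE SET name = excluded.name, age = excluded.age
"""

con.execute(UPSERT, (1, "alice", 30))   # new row
con.execute(UPSERT, (1, "alice", 31))   # same id: age is updated, no duplicate
con.execute(UPSERT, (2, "bob", 25))     # new row

rows = con.execute("SELECT id, name, age FROM users ORDER BY id").fetchall()
print(rows)
```

The second call hits the primary key conflict and updates the existing row instead of failing, so the table ends up with two rows rather than three.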
